Modern unmanned ground systems (UGSs) may still look like vehicles, but they behave more like software systems with wheels.

Mobility still matters, but it’s shaped less by chassis design and more by the onboard software for sensing, navigation, communication, and autonomy.

The hardware sets the baseline. The stack determines how far it can go. And to understand why some systems hold up while others lose their footing, it helps to break the stack into core layers.

The Anatomy of Unmanned Ground Systems

Most UGS follow a similar architectural pattern: layered, interdependent, and only as strong as their weakest link.

Each layer plays a distinct role:

- Perception converts the environment into usable data

- Navigation maintains position and direction

- Communication keeps the system connected to operators and networks

- AI drives real-time decision-making and autonomy

- Power and mobility define operational limits

Individually, these components are well understood. Performance comes from how well they cohere under pressure.

The Perception Layer

Modern UGS are packed with perception sensors that continuously translate the physical world into structured inputs — terrain, obstacles, movement, heat signatures, etc.

A typical stack includes:

- LiDAR for spatial mapping

- Cameras for visual context

- Radar or ultrasonic sensors for range and proximity detection

- IMUs to track motion and orientation

Combining sensors compensates for individual blind spots. Cameras struggle in low light. LiDAR degrades in adverse weather. Radar trades precision for robustness.

For instance, Roboception’s perception hardware for various robotic platforms combines 2D and 3D LiDAR, visible and infrared cameras, radar, and an inertial unit to support landmark detection, obstacle avoidance, pathfinding, and validation of autonomy software.

In effect, sensor fusion becomes a source of reliability, stitching partial inputs into a more stable view of the environment than any single sensor provides.
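To make the fusion idea concrete, here is a minimal sketch of inverse-variance weighting, one of the simplest ways to combine independent estimates of the same quantity (here, distance to an obstacle). Each reading is weighted by how much it is trusted, so a noisy camera estimate at night pulls the result less than a precise LiDAR return. The sensor names and variance values are hypothetical, and this is not a description of how Roboception or any other vendor fuses data.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    sensor: str        # hypothetical sensor label, e.g. "lidar"
    distance_m: float  # measured distance to the obstacle
    variance: float    # assumed measurement variance (m^2); larger = less trusted


def fuse_ranges(readings: list[RangeReading]) -> tuple[float, float]:
    """Inverse-variance weighted average of independent range estimates.

    Returns (fused_distance, fused_variance). Readings with a
    non-positive variance are treated as invalid and skipped.
    """
    valid = [r for r in readings if r.variance > 0]
    if not valid:
        raise ValueError("no valid readings to fuse")
    weights = [1.0 / r.variance for r in valid]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, valid)) / total
    # The fused variance is never larger than the best single input's.
    return fused, 1.0 / total
```

With LiDAR at 10.0 m (variance 0.01), camera at 12.0 m (variance 1.0), and radar at 10.5 m (variance 0.25), the fused estimate lands at about 10.04 m, dominated by the LiDAR reading, and its variance is smaller than any single input’s.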

Navigation and Localization Layers

Once the system can “see,” it needs to know where it is. That sounds straightforward until GPS becomes unreliable, which happens more often than most demos suggest.

Modern UGS hedge across multiple approaches. GNSS provides a baseline when available. SLAM builds maps on the fly. Inertial systems fill gaps when external signals drop. Because each method performs unevenly depending on the environment, they are designed to overlap, maintaining continuity when conditions degrade.
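The overlap between GNSS and inertial sensing can be sketched as a predict-and-correct loop: integrate speed and heading every step (dead reckoning), and pull the estimate toward a GNSS fix whenever one arrives. This is a deliberately simple complementary blend with a fixed, hypothetical gain, not a Kalman filter, and it leaves SLAM out entirely; the class and parameter names are illustrative.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Pose2D:
    x: float
    y: float


class FallbackLocalizer:
    """Dead-reckons between GNSS fixes and blends fixes in when available.

    gnss_gain is a hypothetical tuning parameter: 1.0 trusts each fix
    completely, smaller values smooth out noisy fixes.
    """

    def __init__(self, start: Pose2D, gnss_gain: float = 0.5):
        self.pose = Pose2D(start.x, start.y)
        self.gnss_gain = gnss_gain
        self.steps_since_fix = 0

    def step(self, speed_mps: float, heading_rad: float, dt_s: float,
             gnss_fix: Optional[Pose2D] = None) -> Pose2D:
        # Predict: integrate speed and heading (inertial/odometry input).
        self.pose.x += speed_mps * math.cos(heading_rad) * dt_s
        self.pose.y += speed_mps * math.sin(heading_rad) * dt_s
        if gnss_fix is not None:
            # Correct: move part of the way toward the external fix.
            self.pose.x += self.gnss_gain * (gnss_fix.x - self.pose.x)
            self.pose.y += self.gnss_gain * (gnss_fix.y - self.pose.y)
            self.steps_since_fix = 0
        else:
            # No external signal: drift accumulates the longer this runs.
            self.steps_since_fix += 1
        return Pose2D(self.pose.x, self.pose.y)
```

A fixed gain keeps the example short; production localizers typically weight each correction by estimated uncertainty instead, which is what a Kalman filter does.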

A group of researchers recently tested how a combined setup can improve UGS navigation under different environmental conditions. Their platform, equipped with LiDAR, radar, and RGB-D cameras, autonomously switched its sensor and SLAM strategy based on the current weather:

- Camera-based SLAM — in good daylight

- Camera + LiDAR fusion — during nighttime

- Radar SLAM — in rain or fog

By matching the method to the environment (based on live weather data), the system maintained above-average accuracy across changing conditions.
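The switching logic in that experiment reduces to a small decision function. The sketch below encodes the three pairings listed above; how the researchers resolved overlapping conditions (say, rain at night) isn’t stated, so the priority order here, with rain or fog overriding lighting, is an assumption.

```python
from enum import Enum


class Condition(Enum):
    DAYLIGHT = "daylight"
    NIGHT = "night"
    RAIN = "rain"
    FOG = "fog"


class SlamStrategy(Enum):
    CAMERA = "camera-based SLAM"
    CAMERA_LIDAR = "camera + LiDAR fusion"
    RADAR = "radar SLAM"


def select_strategy(conditions: set[Condition]) -> SlamStrategy:
    """Map live weather/lighting conditions to a SLAM strategy.

    Assumed priority: rain or fog outranks lighting, since both cameras
    and LiDAR degrade in it. With no adverse condition reported, the
    function defaults to camera-based SLAM.
    """
    if conditions & {Condition.RAIN, Condition.FOG}:
        return SlamStrategy.RADAR
    if Condition.NIGHT in conditions:
        return SlamStrategy.CAMERA_LIDAR
    return SlamStrategy.CAMERA
```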

AI and Autonomy Layer

Perception and navigation generate data. AI decides what to do with it.

This is where UGS start to feel less like remote tools and more like semi-independent systems. Path planning, obstacle avoidance, terrain adaptation — these functions shift routine decisions away from the operator.
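Path planning is the easiest of these to show in code. A textbook approach is A* search over an occupancy grid: expand cells in order of cost so far plus an estimate of the remaining distance, skipping obstacles. The grid encoding and function name below are illustrative assumptions; the sketch ignores terrain cost and vehicle dynamics, which a fielded planner would have to account for.

```python
import heapq
from typing import Optional

Grid = list[list[int]]  # 0 = traversable, 1 = obstacle
Cell = tuple[int, int]


def astar(grid: Grid, start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """A* over a 4-connected grid with unit step cost and a Manhattan heuristic."""
    rows, cols = len(grid), len(grid[0])

    def h(c: Cell) -> int:
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(c[0] - goal[0]) + abs(c[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries: (f = g + h, g, cell)
    came_from: dict[Cell, Cell] = {}
    g_cost = {start: 0}
    while open_heap:
        _, g, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk parent links back to the start to rebuild the path.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if g > g_cost.get(current, float("inf")):
            continue  # stale entry superseded by a cheaper route
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    came_from[(nr, nc)] = current
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None  # goal unreachable
```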

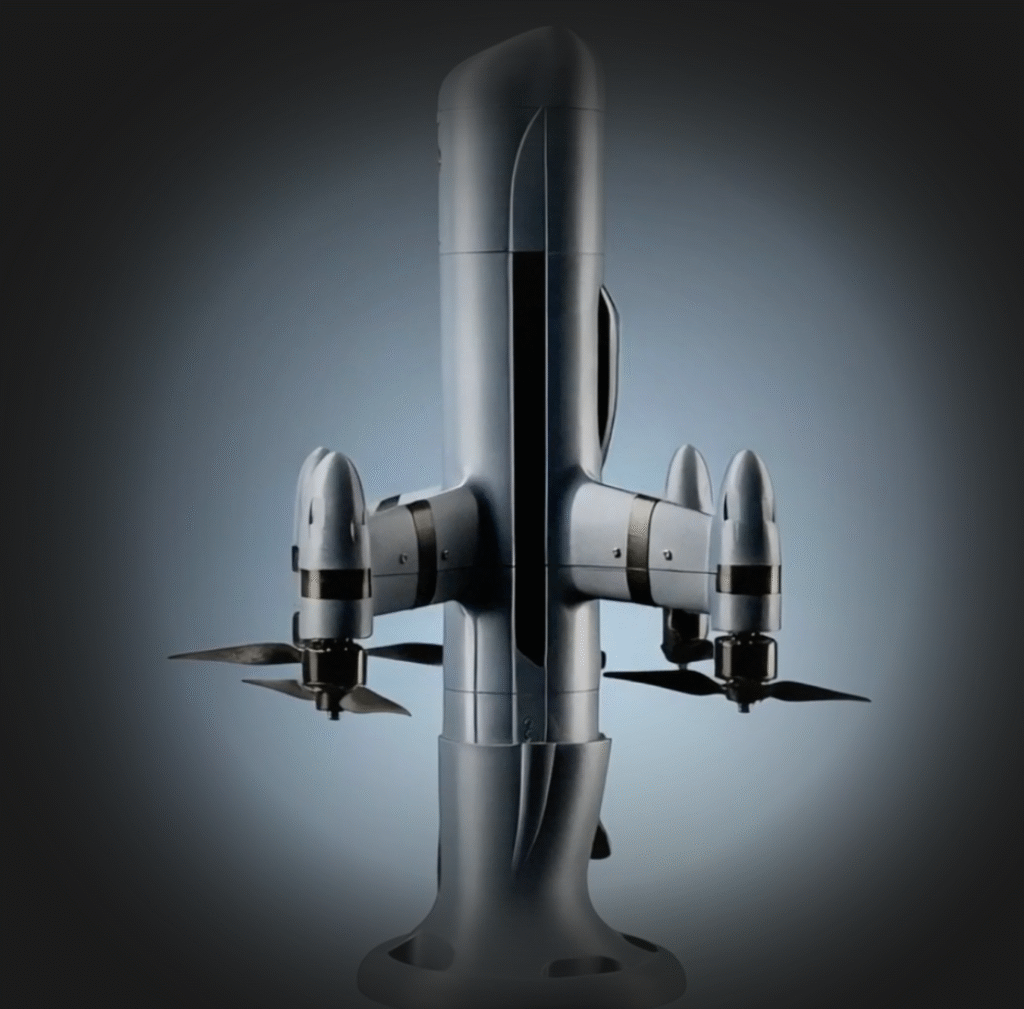

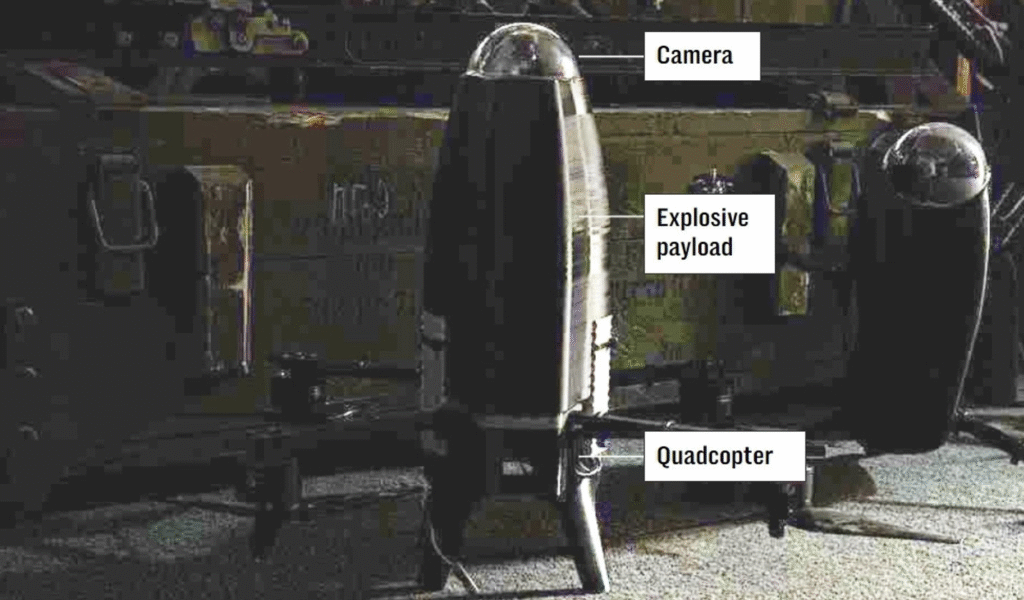

Autonomy tends to sit on a spectrum. Some systems still require hands-on control. Others handle defined tasks with minimal input. For example, Elbit’s ROOK UGV can navigate rough terrain day and night to deliver supplies and carry out intelligence-gathering missions, including by dispatching onboard VTOLs. The Leonidas Autonomous Ground Vehicle, in turn, offers mobile counter-UAS capability: it can deploy to pre-planned intercept points or maneuver across a perimeter to protect critical assets from incoming attacks.

Communication and Control Systems

Autonomy often gets most of the attention in unmanned ground systems, but connectivity is what makes it possible.

Communication systems link the operator, the vehicle, and the wider mission environment. When that link weakens, visibility drops, and control becomes reactive. Most stacks rely on RF links, sometimes extended through mesh networks or relays. Yet each option introduces trade-offs in latency and coverage.
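The relay trade-off can be pictured as a graph problem: each link has a latency, and a route through a relay wins if its total latency beats the direct path, or if the direct path doesn’t exist at all. Below is a minimal sketch using Dijkstra’s algorithm; the node names and latency numbers are hypothetical, and actual mesh radios run their own routing protocols rather than anything like this code.

```python
import heapq
from typing import Optional

# Hypothetical link table: node -> {neighbor: one-way latency in ms}.
Links = dict[str, dict[str, float]]


def lowest_latency_route(links: Links, src: str,
                         dst: str) -> Optional[tuple[float, list[str]]]:
    """Dijkstra's algorithm over relay links weighted by latency.

    Returns (total_latency_ms, route) or None if dst is unreachable.
    """
    dist = {src: 0.0}
    prev: dict[str, str] = {}
    heap = [(0.0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            route = [node]
            while node in prev:
                node = prev[node]
                route.append(node)
            return d, route[::-1]
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for nbr, latency in links.get(node, {}).items():
            nd = d + latency
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    return None
```

For instance, if the direct operator-to-UGV link costs 120 ms and a hop through an aerial relay costs 20 ms plus 30 ms, the function returns the 50 ms relay route.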

The constraints are familiar. Signal loss in complex terrain. Interference in contested environments. Range limits without infrastructure.
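On the vehicle side, one common way to cope with signal loss is a heartbeat watchdog: the operator station sends periodic messages, and the vehicle grades link health by how long it has gone without one, switching to a pre-planned fallback once the link is declared lost. The class, state names, and thresholds below are hypothetical.

```python
from enum import Enum
from typing import Optional


class LinkState(Enum):
    CONNECTED = "connected"
    DEGRADED = "degraded"  # heartbeats late: continue, but alert the operator
    LOST = "lost"          # trigger the pre-planned failsafe behavior


class LinkWatchdog:
    """Classifies link health from time since the last operator heartbeat.

    Thresholds are hypothetical; real values depend on radio and mission.
    """

    def __init__(self, degraded_after_s: float = 1.0, lost_after_s: float = 5.0):
        if not 0 < degraded_after_s < lost_after_s:
            raise ValueError("require 0 < degraded_after_s < lost_after_s")
        self.degraded_after_s = degraded_after_s
        self.lost_after_s = lost_after_s
        self.last_heartbeat_s: Optional[float] = None

    def heartbeat(self, now_s: float) -> None:
        self.last_heartbeat_s = now_s

    def state(self, now_s: float) -> LinkState:
        if self.last_heartbeat_s is None:
            return LinkState.LOST  # never heard from the operator
        silence = now_s - self.last_heartbeat_s
        if silence >= self.lost_after_s:
            return LinkState.LOST
        if silence >= self.degraded_after_s:
            return LinkState.DEGRADED
        return LinkState.CONNECTED
```

Passing the current time in as an argument, rather than reading a clock inside the class, keeps the logic deterministic and easy to test.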

Then there’s the control layer. Interfaces shape how quickly operators can interpret and act. Poor design here can blunt even a technically strong system. Capability without usability rarely holds up in real conditions.

Why Integration Is Becoming the Deciding Factor for UGSs

Each layer is mature enough in isolation. Integration is where outcomes diverge.

UGS operate in environments where visibility is partial, communication is inconsistent, and conditions shift quickly. Systems need to coordinate across these constraints in real time.

That’s pushing operations toward orchestration across domains.

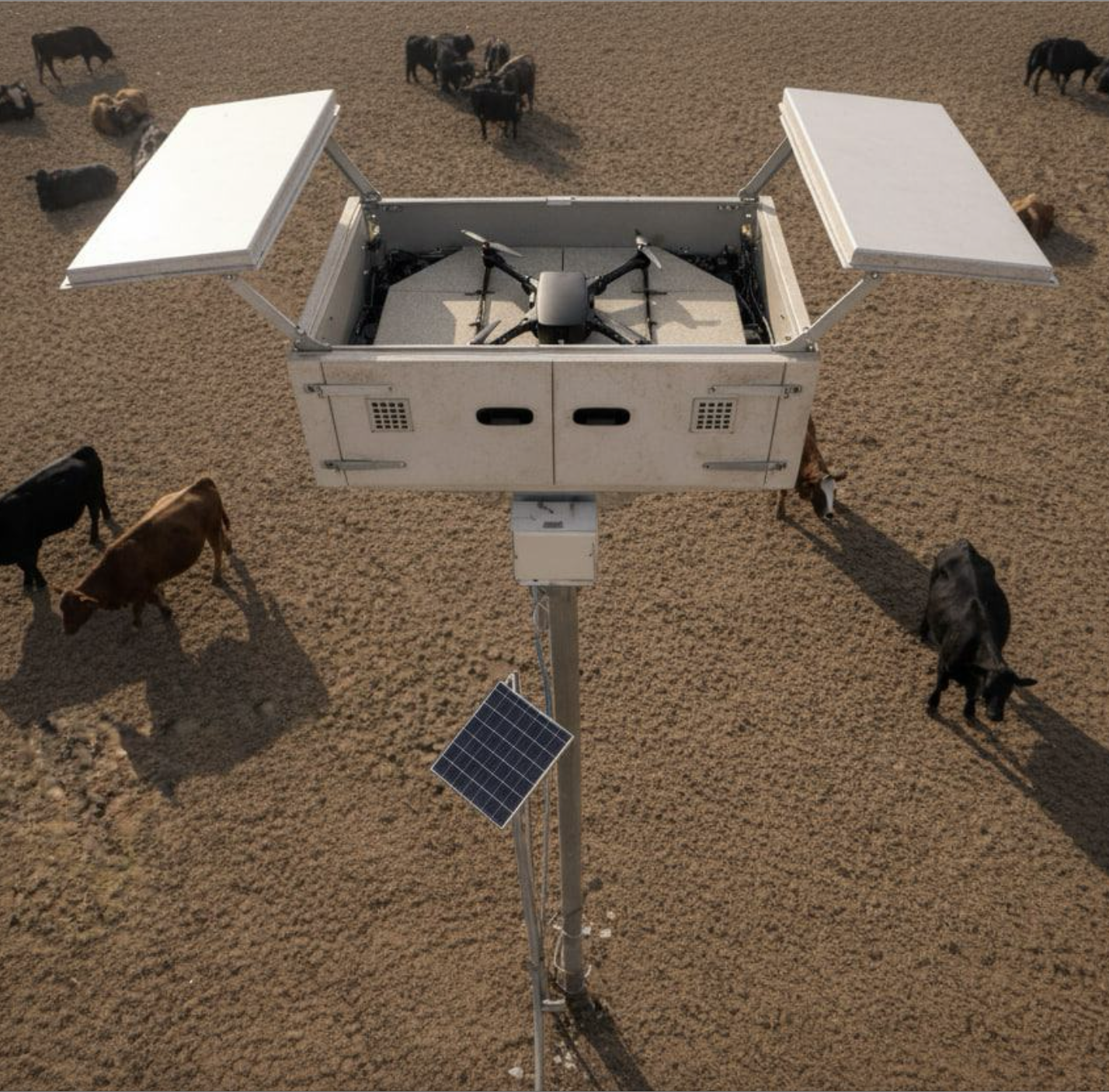

Ground systems handle execution. Aerial systems extend visibility and act as communication relays. AI coordinates both into a shared operational picture. In this vein, platforms like Osiris DroneOS focus less on individual vehicles and more on stitching systems together. The value comes from extending awareness, stabilizing connectivity, and aligning decision-making across assets.
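One small ingredient of a shared operational picture is merging reports from ground and aerial assets so each tracked object appears once, using its freshest report. The sketch below assumes every asset already tags observations with a common track ID; in practice, associating detections from different sensors with the same object is a hard problem that this example skips. It is illustrative only and says nothing about how Osiris DroneOS works internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Track:
    track_id: str      # hypothetical ID shared across assets for one object
    source: str        # reporting asset, e.g. "ugv-1" or "drone-1"
    x: float
    y: float
    timestamp_s: float


def merge_picture(*feeds: list[Track]) -> dict[str, Track]:
    """Build a shared picture keeping the most recent report per track ID."""
    picture: dict[str, Track] = {}
    for feed in feeds:
        for track in feed:
            current = picture.get(track.track_id)
            if current is None or track.timestamp_s > current.timestamp_s:
                picture[track.track_id] = track
    return picture
```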

The shift is subtle but consequential. Better components help. Integrated systems change how operations run.

And that’s where the category is heading.